Cyber Security Risk Assessment 2019

Rapid developments in the field of artificial intelligence are creating new risks, but also offer new opportunities for cyber security. Research into artificial intelligence (AI) is flourishing; for example, algorithms are becoming increasingly effective in recognising patterns and making decisions in complex situations. Some AI applications may also lead to new or greater risks for cyber security. An example of such a risk is the deep fake technique, in which AI is used in the production of fake photographs and videos. The technique could be exploited by nation-state actors to spread disinformation. Cyber criminals can also use deep fakes in identity fraud and spear phishing (i.e. sending targeted and personalised e-mails in order to unlawfully obtain or hack into information).

Artificial intelligence can also be used in rapid and systematic searches for software vulnerabilities, with the aim of subsequently exploiting those vulnerabilities. On the other hand, AI also offers opportunities for cyber security. It can be used to help detect deep fake products and other forms of disinformation, DDoS attacks and rogue websites. The possibilities for detecting software vulnerabilities can be used by software developers, thus preventing vulnerable software from entering the market.

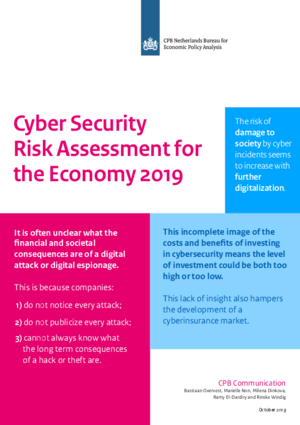

Uncertainty and incomplete information are hampering cyber security policy. Various opinions and guidelines are circulating on the optimal investment level for cyber security and on what measures should be taken. Nevertheless, or precisely because of that, it is difficult for individual users and organisations to make informed decisions about which measures to take. This is partly due to the fact that existing opinions and guidelines are never an exact match for the situations of specific users and organisations, and partly due to a lack of information about the extent of cyber risks and their financial consequences. And finally, some of the consequences of inadequate cyber security may have an impact on third parties. This lack of information prevents users from determining the benefits of their investment in security. It also forms an obstacle for government policy: to what extent would it be desirable to stimulate private and public investment in cyber security? For cyber insurers, the lack of information means that premiums cannot be properly risk-based.

The fragmented landscape of initiatives on collaboration and education may hamper the provision of information. The government uses multiple channels to inform various target groups about cyber risks. In addition, it seeks to stimulate various public–private partnerships to encourage participants to share knowledge and experiences. In certain cases, these initiatives on collaboration and education may overlap. The risks of this type of fragmentation are that target groups become less aware of where relevant information can be found, that initiatives duplicate each other’s work, and that important themes are left unaddressed.

Authors